Before you paste care notes into NotebookLM

I love Google NotebookLM. It is one of the most genuinely useful AI tools to emerge in recent years: upload a pile of documents, ask nuanced questions, and it synthesises answers with source citations. But this powerful tool comes at a cost: you are exchanging your data for insights. This post walks through exactly what the terms say, why it matters for anyone operating in aged care, the NDIS, or any healthcare-adjacent context, and what you should do about it.

Do not use Google NotebookLM with any information that identifies a care recipient, contains health details, or could be considered Protected Health Information (PHI). A signed HIPAA Business Associate Agreement (BAA) with Google does not change this, because NotebookLM is not covered by that agreement.

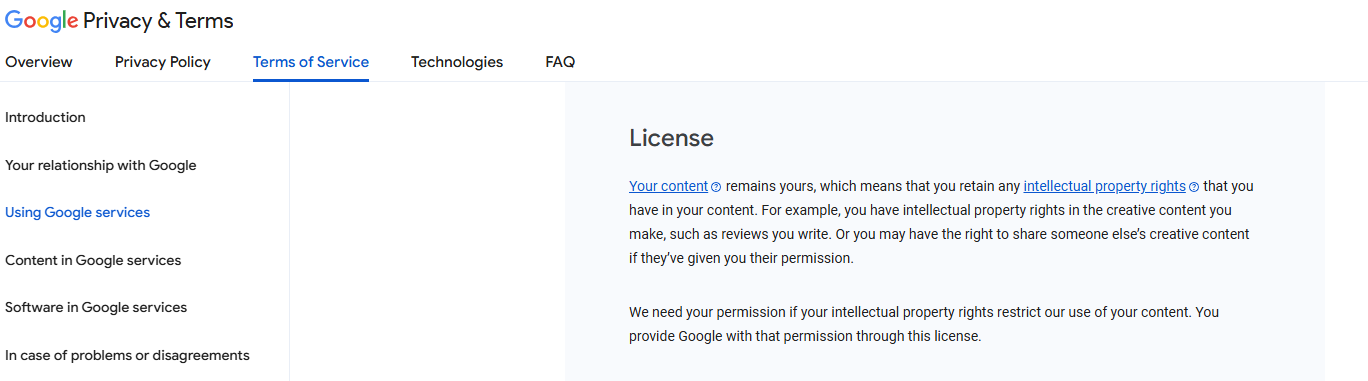

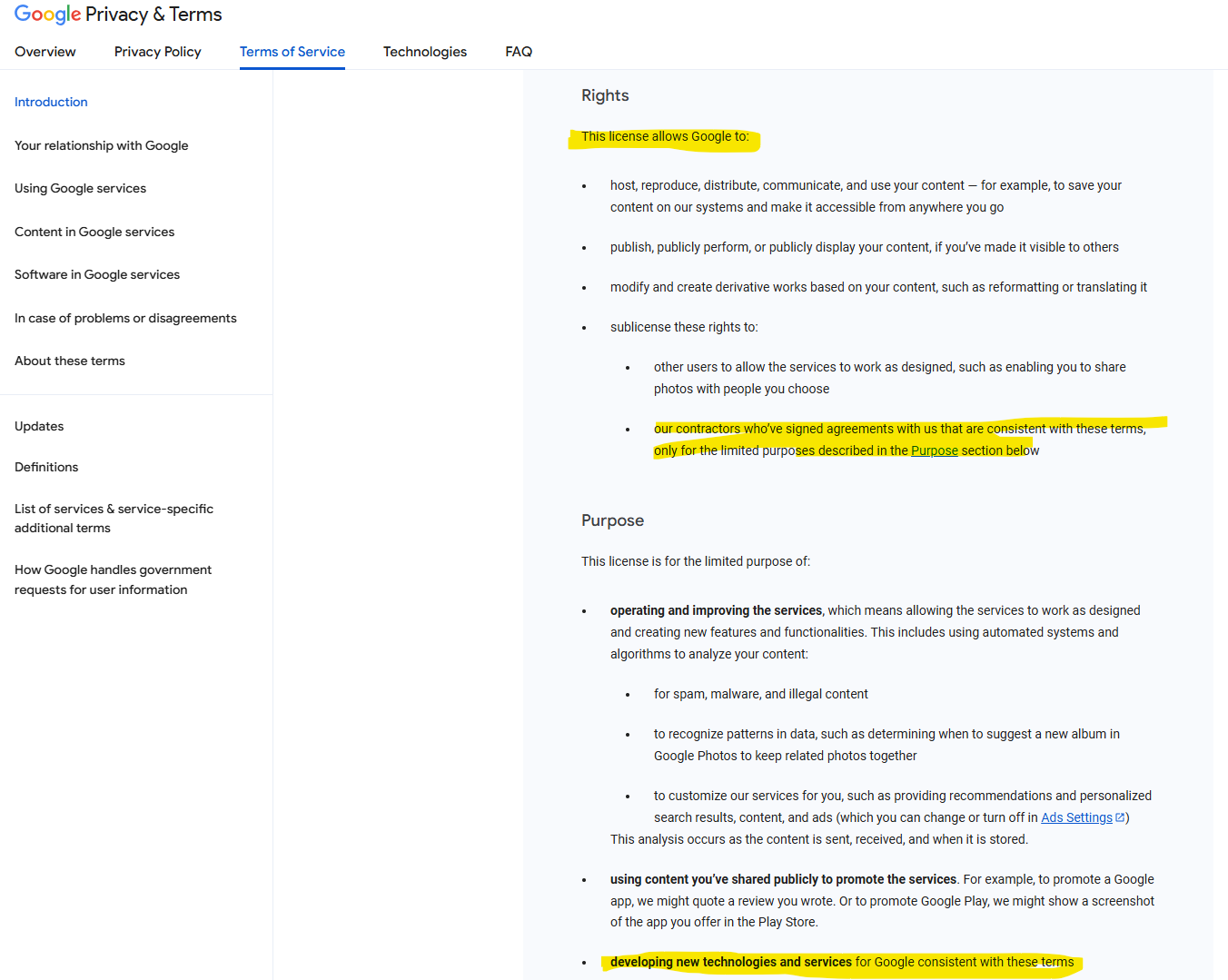

If you access NotebookLM through a personal Google account, or through a Google Workspace edition that does not include HIPAA coverage, you are governed by the standard Google Terms of Service. Under those terms, when you provide content to Google's services, you grant Google a broad licence to host, reproduce, distribute, modify, and use that content — including to operate and improve their services, which explicitly covers using automated systems to analyse your content. Google can also sublicense these rights to contractors.

If a support worker pastes a progress note into free-tier NotebookLM, even just to summarise it, that clinical narrative about a real person's daily functioning, behaviour, medical history, or disability support needs can feed Google's model training pipeline. That permission is written into the terms you agree to when you use the service.

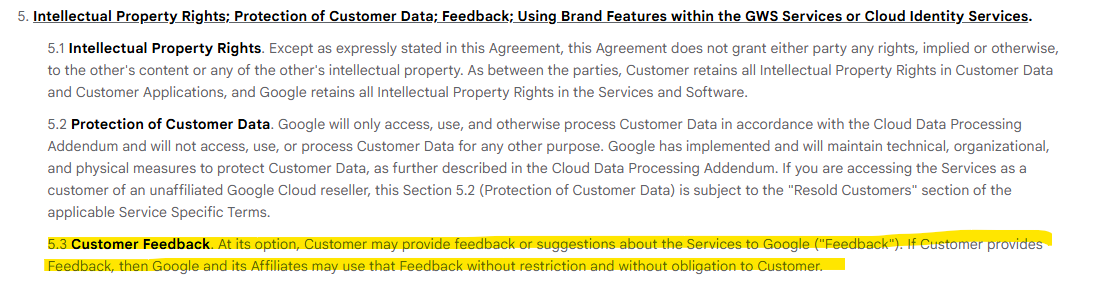

Paid Google Workspace customers get stronger protections under the Google Workspace Terms of Service. Crucially, Section 5.2 (Protection of Customer Data) states that Google will only process Customer Data in accordance with the Cloud Data Processing Addendum — not for other purposes. Section 5.3 on Customer Feedback is worth noting too: if you voluntarily submit feedback about the service, Google can use that without restriction. Keep that in mind.

However, paid Workspace terms still contain a critical restriction in Section 3.3: you must not "transmit, store, or process health information subject to United States HIPAA regulations except as permitted by an executed HIPAA BAA."

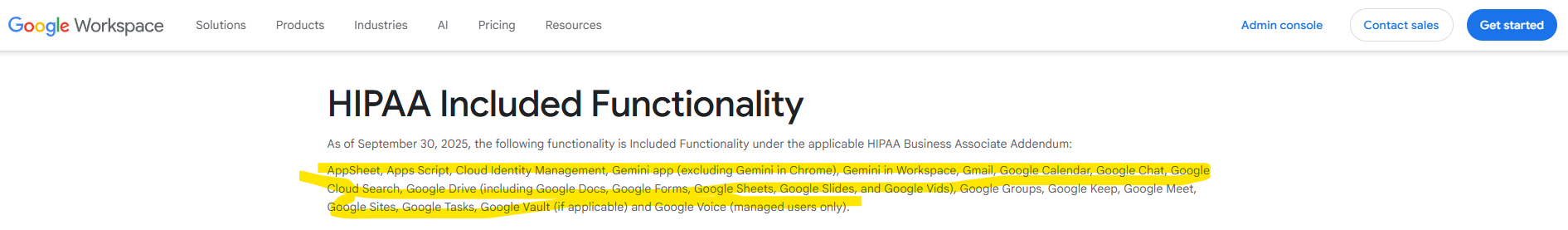

So the natural next question is: can you just sign a HIPAA BAA with Google and then use NotebookLM for health data? I went and checked. The answer is no: Google's HIPAA coverage does not extend to NotebookLM.

As you can see, Google's HIPAA Included Functionality list does not mention NotebookLM at all.

Like many other publicly available chatbots, NotebookLM has built-in landmines: the feedback buttons (the thumbs-up and thumbs-down icons) let you hand over your data for Google to train its AI technology on. This is also written into the terms and conditions. These buttons cannot be turned off by default and are designed to be clicked: a ticking time bomb waiting for you to provide feedback by accident.

A Note on the "But We're Australian" Argument

HIPAA is American legislation, but Australian operators working with international frameworks, or organisations that choose to align with HIPAA as a gold standard, need to pay attention. More to the point: this clause signals Google's own intent about what these services are designed for. The spirit is clear even if the letter is jurisdiction-specific.

Australia's aged care and NDIS sectors operate under their own legislative frameworks — primarily the Privacy Act 1988, the Aged Care Act 1997, and the NDIS Act 2013 — rather than HIPAA directly. But the principle is identical: health information, disability-related data, and personal details about care recipients are among the most sensitive categories of personal information recognised in law. Organisations have strong obligations around how this data is collected, stored, used, and disclosed.

When a support worker or care coordinator pastes a progress note, a behaviour support plan excerpt, or a clinical summary into an AI tool, they are potentially disclosing personal health information to a third party. That disclosure needs to be authorised, purposeful, and protected under a clear data processing agreement. A commercial product's general terms of service, which permit the provider to use your content to improve their AI, do not constitute that kind of agreement.

Some Australians argue that because HIPAA is American, we don't need to worry about it.

Well, first of all, the Australian Privacy Principles under the Privacy Act classify health information as "sensitive information" with the highest protection tier. Any unauthorised disclosure to a third party — including an AI provider — without participant consent or a lawful basis is a potential breach. The technology doesn't change the legal character of the act.

Second: if Google's own terms say "don't use this for health information without a BAA," and there is no BAA available for the tool in question, that is a clear and direct signal from the vendor about the appropriate use of their product.

If you are using artificial intelligence to manage sensitive information, make sure you read the fine print of the technology provider's privacy policy and terms and conditions. With that, you will better understand how the provider uses your data, and you can assess the risk against your organisation's risk appetite.
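One practical safeguard is a quick automated check before any note leaves your systems. The sketch below is a minimal illustration in Python, not a complete PHI detector: the pattern names, the regular expressions, and the function names `find_identifiers` and `has_obvious_identifiers` are all assumptions made for this example. It only catches a few obvious formats (dates, Australian-style mobile numbers, email addresses, long digit runs that could be reference numbers).

```python
import re

# Hypothetical patterns for illustration only. They catch a few obvious
# formats and will miss names, addresses, and free-text clinical detail.
PATTERNS = {
    "date": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "au_mobile": re.compile(r"\b04\d{2} ?\d{3} ?\d{3}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "long_number": re.compile(r"\b\d{9,11}\b"),  # possible reference numbers
}


def find_identifiers(text: str) -> dict:
    """Return a mapping of pattern name to the matches found in the text."""
    hits = {}
    for name, pattern in PATTERNS.items():
        found = pattern.findall(text)
        if found:
            hits[name] = found
    return hits


def has_obvious_identifiers(text: str) -> bool:
    """True if any pattern matched. False does NOT mean the text is safe."""
    return bool(find_identifiers(text))


if __name__ == "__main__":
    note = "Called participant on 0412 345 678 after the fall on 3/11/2024."
    print(find_identifiers(note))
```

A clean result from a check like this is not clearance to paste. Names and clinical narrative pass straight through it, so it belongs alongside policy and training, not in place of them.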

How Carelogix is different

We started Carelogix with a clear premise: the people who use our platform are support workers, coordinators, and care managers dealing with some of the most sensitive personal information that exists. The infrastructure they rely on has to be held to a standard that matches that responsibility.

Every piece of data processed or stored through Carelogix, including the AI layer, lives in Australia. This isn't a marketing claim about where the company is headquartered. It means the physical infrastructure for both data storage and AI processing is located on Australian soil. No cross-border transfers to the US or other countries. No ambiguity about which jurisdiction's laws govern your data in transit.

For organisations operating under the Australian Privacy Act, the Aged Care Act, or the NDIS framework, this matters enormously. An AI system that routes participant data through overseas servers — even briefly, even "just for processing" — creates a cross-border disclosure that requires specific justification under the Australian Privacy Principles. We have removed that complexity entirely.

Every significant update is an invitation for new vulnerabilities. Our penetration testing programme treats it that way. The findings are reviewed internally at a leadership level, not delegated entirely to a dev ticket queue, because security posture is a product decision, not just a technical one.

All data stored in Carelogix is encrypted at rest. All data moving between your device, our application layer, and our storage infrastructure is encrypted in transit using current best-practice standards. This applies whether a support worker is logging a progress note on a mobile device in the field or a coordinator is reviewing a care plan from the office.
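For readers who want a concrete picture of what "encrypted in transit" looks like from the client side, here is a minimal Python sketch using only the standard library. It is not Carelogix's implementation, and the function name `strict_tls_context` is hypothetical. It assumes that TLS 1.2 is an acceptable minimum version for your organisation.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies certificates and hostnames
    and refuses protocol versions older than TLS 1.2 (an assumed floor)."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


if __name__ == "__main__":
    ctx = strict_tls_context()
    print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

A context like this can be passed to standard-library clients such as `urllib.request.urlopen(url, context=ctx)`, so a connection that cannot meet the floor fails instead of silently downgrading.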

This is not exotic; it should be table stakes for any platform handling health information. We mention it because we've seen competitors treat it as optional, or implement it inconsistently across different parts of their stack. We pride ourselves on the work we do. For us, this is basic data integrity, supported by mandatory training and established security processes.

Reference

https://policies.google.com/privacy

https://policies.google.com/terms

https://cloud.google.com/terms

https://workspace.google.com/terms/premier_terms/

https://workspace.google.com/terms/2015/1/hipaa_functionality/?hl=en
